Last night I had a dream that I was leaving Campaign Monitor. Then I woke up and realised that in less than a week, this sad dream was going to come true. I’ve had such a great time working here and I’ll miss it immensely. It’s a sad truth that often, doing what’s best for you means saying goodbye to people you love.

So before I go, here’s a little look back at one of the more interesting things I implemented at Campaign Monitor – the QA newsletter.

When I first joined Campaign Monitor, there was no formal testing, let alone test reports. I needed a way to communicate test results to the team, and I also needed a way to introduce testing concepts to a company that discouraged formal meetings. So I decided to use the company’s own product to deliver the test report – I made the weekly test report into an email campaign.

Using the application under test to send email campaigns had several advantages. First, there was the “eat-your-own-dogfood” advantage of experiencing the product as a user, along with all of the bugs and user experience issues that come with it. It helped me put myself in the end-user’s shoes. Also, I could take advantage of Campaign Monitor’s tracking features to tell who was actually reading the newsletters and clicking the links, so I could track the kind of impact the newsletter was having on different teams.

Reporting can often be like a marketing exercise, and the fun newsletter format of the test report made it eye-catching and easy to read. As the company culture was fun and laid-back, I included some funny pictures and interesting links to keep people reading.

I made the first newsletter using a template from Campaign Monitor’s free templates page. As there had been no formal testing done prior to my arrival, the newsletter was a way to educate the team about testing terms, test practices and changes that I was making to the test infrastructure. This report tries to answer the questions “What is testing?” and “What are you doing?”

I had a progress update about the current project, an update about improvements made to the automation suite, an introduction to some testing terms and a link to some testing-related blog posts. I sent out an email like this once a week in the same format. Each one introduced the team to some new testing terms, and kept them updated on the changes that were being made to the development process with regard to testing. I sent the email to everybody in the company (which at the time was fewer than 20 people).

I received some very positive feedback about the newsletter. Using Campaign Monitor meant that I could track who opened the newsletter and clicked links. It also meant that I could test the main function of the application (sending campaigns) end to end as a byproduct of creating and sending the report.

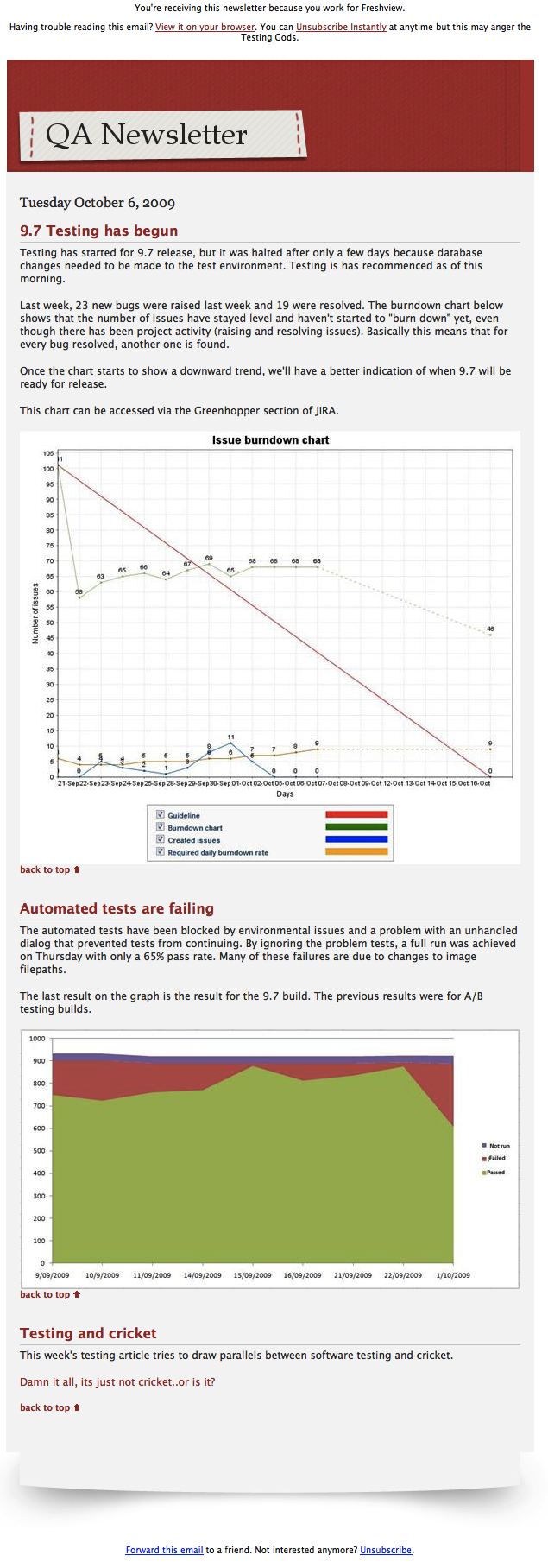

After about a month of sending these reports, I decided it was time to introduce the team to some metrics. In particular, I wanted to draw attention to the burndown chart and the automated test results. The burndown chart was visible to the team in JIRA, but hardly anybody was looking at it and most people didn’t know what it meant. So I included a screenshot of the burndown chart with an explanation of what the trend was telling us. This report tries to answer the questions “What do I need to know?” and “Why should I care?”

The team had access to the daily automation results, but I wanted to show the results trend over time as an indication of declining or increasing build quality.

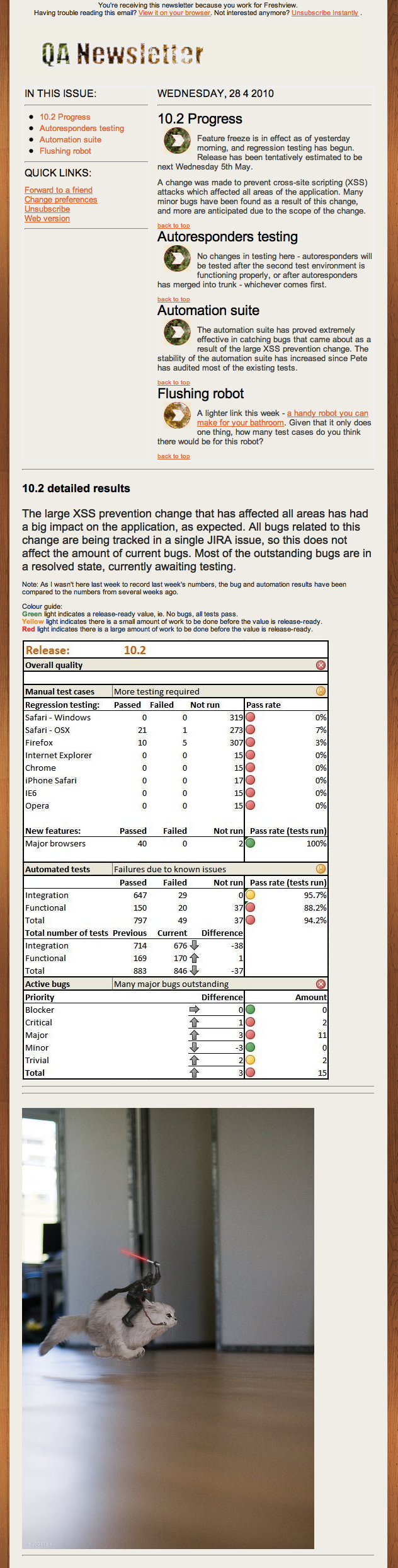

The previous report tried to answer the question “What do I need to know?” But the managers and the support team just wanted to know when we could release the product. The developers just wanted to know how much work they had left to do. So the real questions I needed to answer were “Why aren’t we done yet?” and “Why is it taking so long?”

Project progress is still the star of the show in this report. Freshview values quality over deadlines, and this shows in the language of the progress report. When the development culture is “It’s done when it’s good enough to release”, the question “Is it good enough yet?” becomes the deciding factor for the release date.

The detailed results are an attempt to show progress towards that “good enough” goal, and to answer the questions “Why aren’t we done yet?” and “Why is it taking so long?”

Then we moved the above to a dashboard, so this part of the newsletter became redundant. The dashboards evolved too, and the test case and bug counts were removed completely. You can read more about this in this article written for The Testing Planet: Dash Dash Evolution.

So the newsletter became more of a general project update, as well as a way to try out new email templates and show the team what they looked like. You have to remember that this is a team that has one meeting a week, private offices and rare, if any, standups. This was filling a communication gap.

This newsletter started answering the question “What are the testers doing?” We started photographing the dashboard. With smaller teams, communication was better, so formal reporting was required even less. This was more of a way to let support know what was in the works.

It was pretty obvious by this point that the dashboard was making the newsletter kind of redundant, so all that was required was a brief progress update.

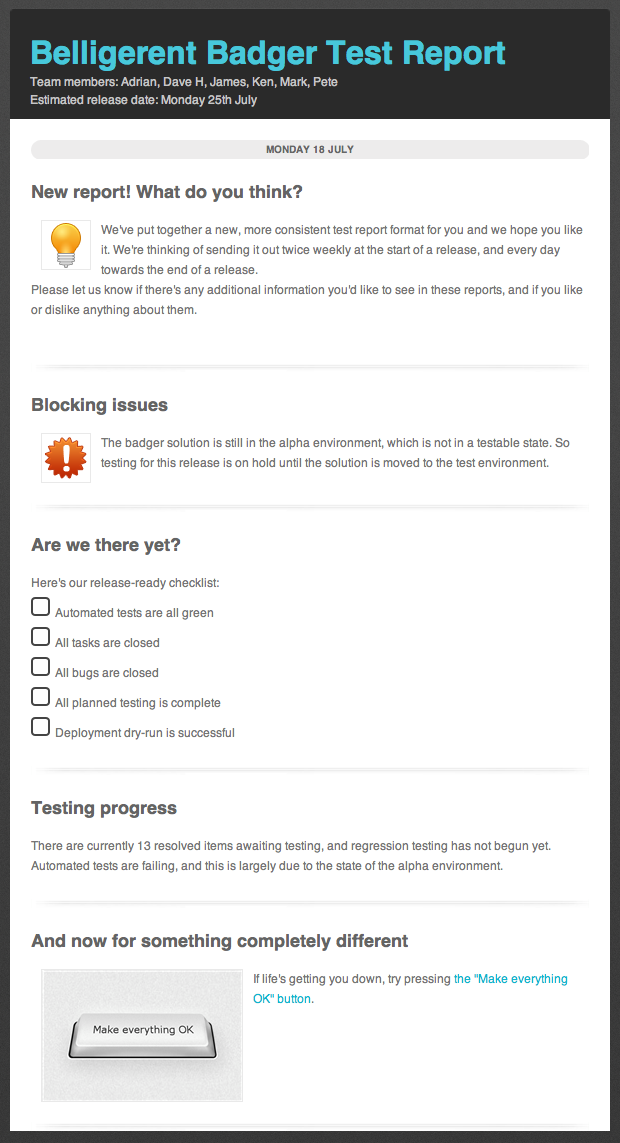

We had a brief attempt to revive the format, and make a project-specific test report. This one has a checklist, a highlighted section for blocking issues and a little paragraph about progress. We found that reporting this kind of progress on a weekly basis was a bit pointless. At the start of the project, nothing would be checked off the checklist. Towards the end of the project, the checklist would be complete within a matter of days. So while the checklist may have been useful, the newsletter was the wrong medium for this kind of information.

In the end I realised that the reports had outlived their usefulness. The initial reports had achieved their goal of creating awareness of testing practices when testing was still new. The next few reports had kept the support team in the loop about releases, and later this was replaced by having the support lead attend weekly developer meetings, and having developers make more of an effort to notify support about upcoming releases. Moving the testers’ offices physically closer to the developers’ offices also helped a lot – most discussions were happening in the hallway outside developers’ offices, so we could actually be a part of those meetings.

So we don’t send the newsletters anymore. But they are a great example of an idea that worked for the right contexts at the right times. Sometimes throwing something away is progress too.

One thought on “The evolution of the QA newsletter”

Hi Trish,

best of luck for the future! The above is not only a testament to your fantastic calibre but also to the asset you will be to any company in the US.

You’ve inspired me to put this on my 7summits list.

All the best,

– Richard
